We currently exist in a world of instant dopamine, artificial intelligence (AI), and profit-at-all-costs algorithms.

Spend enough time on the internet and you’ll find essay after essay, usually by somebody running an AI start-up, saying AI is coming for all of our jobs and will take over the world.

Their solution: “Go ALL IN on using AI or get left behind.”

While I agree that AI is getting “smarter,” and will upend a lot of jobs and industries…

I’ve personally drawn the exact opposite conclusion for how to proceed.

This isn’t an essay about whether we should or shouldn’t use AI. “AI” is dozens of different things, in different industries, and cannot be painted with one broad stroke. It will have unexpected benefits and consequences that we cannot know yet.

This also isn’t an essay about whether or not we’re in a bubble, because I think the AI Genie is out of the bottle either way.

I don’t fault people for using AI to make parts of their job easier, though I do worry how bosses and companies will just force employees to do more and more with less and less.

This isn’t a rallying cry to throw our computers in the dumpster like Ron Swanson either.

This is an essay about choosing to do hard things, even when AI can do it far more quickly and with much less effort.

Let’s get my personal bias out of the way:

This is an essay by me, an imperfect human, who makes my living thinking deeply about life, having human experiences, and then writing about those things, using my human brain and human hands, to connect with other imperfect humans.

With that out of the way, please feel free to listen to the Flaming Lips while reading the rest of this essay:

Frank Herbert: outsourcing our brain to computers is dangerous

In the world of Frank Herbert’s Dune, technology advanced so much that people started outsourcing their decision making to computers.

Unsurprisingly, this had dire consequences:

“Once men turned their thinking over to machines in the hope that this would set them free. But that only permitted other men with machines to enslave them.”

This led to the Great Revolt, where all computers were destroyed. It “took away a crutch” and “…forced human minds to develop.”

Herbert wrote Dune in 1965, but its cautionary tale has probably never been more relevant than it is right now.

Of course, we all outsource quite a bit to computers these days.

I use Google Maps instead of memorizing every route before I start driving. I use a calculator instead of doing long-form math. I use Excel formulas instead of filling out each individual cell in a spreadsheet.

But I try really really hard to not outsource my thinking:

We’ve reached the point where algorithms and AI have essentially removed the need for us to do any critical thinking if we don’t want to.

Algorithmic gods determine which social media posts and articles and shows we see (deciding which reality we inhabit). These algorithms only have one goal: to keep us on their platform longer, to serve us more ads, and to extract more value from us.

In a similar vein, AI has given us the most alluring opportunity yet to outsource our brains completely. Rather than thinking deeply to solve a problem, reading multiple books or studies to create an informed opinion, we can simply ask ChatGPT or Claude and get that answer in seconds.

Let’s set aside the fact that AI still gives plenty of confidently incorrect hallucinations, which requires critical thinking to identify.

My concern with this stuff largely echoes the concern Frank Herbert wrote about in Dune.

I worry about my ability to think critically if I let algorithms and AI decide everything for me. These few companies are each run by a single dude with veto power. As Professor Cal Newport said in his recent New York Times essay:

“I’m done ceding my brain — the core of all that makes me who I am — to the financial interests of a small number of technology billionaires or the shortsighted conveniences of hyperactive communication styles.”

Here’s another important part: if everybody uses the same AI systems that all pull the same sources to derive the same conclusion, the only way I can remain an independent thinker is to derive my own conclusions from my own hard work, boots-on-the-ground research, and lived experiences.

I know. Choosing this harder path is both time-consuming and frustrating. Being bad at something and struggling to find the answer requires way more effort than typing a question into ChatGPT and getting an answer in two seconds.

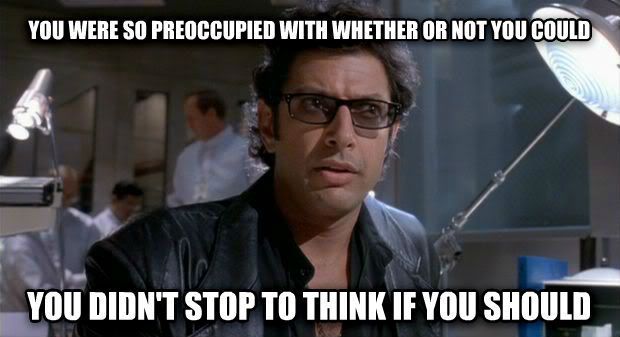

But just because we can doesn’t mean we should.

“Easier” doesn’t always mean better.

It also doesn’t mean we’ll end up with the correct result.

Choosing to do hard things can have unforeseen benefits, if we’re willing to do the heavy lifting ourselves.

Brandon Sanderson: doing hard things has intrinsic value

In Brandon Sanderson’s talk, “We are the Art,” he explains why he was so proud to have written lots of bad books early in his career, books that were never published.

He talks about his goal for writing those books:

“It wasn’t to produce a book to sell. It was for the satisfaction of having written a novel, and feeling the accomplishment of learning how to do it.”

Watching this video called to mind another favorite Sanderson quote about choosing to do creatively challenging work:

“I can do hard things. Doing hard things has intrinsic value, and they will make me a better person, even if I end up failing.”

Fulfilling outcomes in life can often only come as the result of choosing the harder path.

Rewards can have more meaning precisely because they require more human effort to get there:

- We can drive a car 26.2 miles, but choosing to run/jog that exact distance is the point, and makes crossing that finish line so much more fulfilling.

- We can use a forklift to lift something heavy, but we also go to the gym to deadlift to build strength and muscle. Lifting the weight ourselves is the point.

- We can use AI to summarize War and Peace, or use AI to “write a book like War & Peace,” but reading the book is the point. Writing the book is the point.

Of course, there’s a time and place to use a forklift, take alternative modes of transportation, or summarize a document. Not everybody wants to be an author, and some people (myself included) don’t want to run marathons. Great! I also know AI will make lots of annoying busy work obsolete!

Great.

I’m specifically talking about people who choose the easy path to get the end result quickly, and then wonder why it didn’t magically fulfill them or solve their problems.

Sometimes, doing the hard thing imperfectly is the point.

It’s how we develop a viewpoint, cultivate taste, get better, find our voice, challenge ourselves, feel a sense of accomplishment, and meet others who have chosen the same struggle alongside us.

As Joshua Heath Scott writes in his essay on documentarian Ken Burns, it’s the commitment to craft that cannot be rushed, even when there are easier paths available:

Burns spent five and a half years on the Civil War. Ten and a half on Vietnam. He started The American Revolution in December 2015, when Barack Obama had thirteen months left in his presidency. The film just premiered in 2025. That’s not slowness — that’s commitment to the thing you cannot get by simply maximizing your productivity or churning out your work.

In a world of sterile, optimized, algorithmic perfection, actively choosing to struggle with a certain task for a long period of time might be the most important, human thing we can do with our time.

Even if we fail at that task, because we stand on the shoulders of our past failures. We never go back to Square One.

C.S. Lewis: Let “the bomb” find us doing sensible and human things

In 1948, C.S. Lewis wrote an essay called On Living in an Atomic Age, explaining how he was preparing for a potential nuclear apocalypse:

“If we are all going to be destroyed by an atomic bomb, let that bomb when it comes find us doing sensible and human things – praying, working, teaching, reading, listening to music, bathing children, playing tennis, chatting to our friends over a pint and a game of darts – not huddled together like frightened sheep and thinking about bombs.

They may break our bodies (a microbe can do that) but they need not dominate our minds.”

Swap “atomic bomb” for “artificial intelligence,” and we have a thoughtful quote about how to spend our time in 2026, even as we race ever closer to Artificial General Intelligence (AGI) and Skynet and the Matrix.

One day, we might get taken over by the robots.

That is going to happen whether or not I “get good” at AI.

If AI is getting better and faster and smarter by the day, then trying to get better at using AI is kind of like training for a footrace with millions of other people, except we’re all racing against a self-driving car that can go 200mph.

Why would I try to win that race?!

Personally, instead of trying to get better at AI, or worrying about whether or not AI is going to break humanity, I’m going to keep my focus on precisely the things that make me human.

Doubling down on what makes me a weird human

I began work on this newsletter three weeks ago.

It started out as a short, fun essay about working with my hands in the real world instead of on the computer.

That evolved into this other recently published essay.

I then saw the Dune Part 3 trailer, and Herbert’s Dune quote popped into my mind. This essay then started to take a different focus, so I went for a few walks while rewriting it in my head.

I spent a few mornings writing and rewriting and reshaping it. Last week, I rewrote the middle section. Today, I hit “publish.”

Sure, I could have fed my 17+ years of essays into ChatGPT. Oh, who am I kidding? All of my essays and books have already been vacuumed up by AI without my consent.

But I could have simply typed a prompt like: “Write an essay in the voice of Steve Kamb about doing hard things” and it would spit out something that kind of sounds like me.

That defeats the entire purpose.

Writing IS the point for me! I want to get better as a writer, to feel the satisfaction of hitting “publish” on an essay I worked really hard on and thought a lot about for a long time. These words are the unique combination of my 40+ years of life, 17 years of full-time writing and research, and treating writing as an important craft.

Would AI have pulled those exact quotes from these three specific fantasy authors to use in my essay?

Unlikely, because I didn’t know I was going to quote them when I started writing.

My brain decided to recall the important experience of rereading Dune two years ago when I saw that Dune Part 3 trailer.

I’ve reread Brandon Sanderson’s Mistborn trilogy three times over the past decade, which led me to subscribe to his YouTube channel, and eventually to his advice to writers and his recent talk on AI.

I read C.S. Lewis’s Narnia series as a teenager, and at some point over the past year, seeking wisdom in a chaotic world, I started reading his nonfiction essays and learning more about his worldview and philosophy.

I didn’t read these books and essays to find quotes for this essay.

I read (and reread) these books over the past 20+ years because I am a human who enjoys reading other humans and chases my curiosity.

It was all of that, plus my lived experiences, that caused my brain to subconsciously connect the dots months and years after the fact while writing this essay.

It feels like magic, and I love when this happens.

Nothing makes me happier than my brain suddenly recalling a random thought or quote that connects the dots while I’m writing. Even if it causes me to rewrite the whole thing.

Using AI to write and think for me would rob me of this joy and fulfillment. It would prevent me from developing as a writer and improving my taste.

I’m a writer who writes to think and find my voice and opinion. I’m always changing.

Therefore, I have to constantly remind myself to choose the harder, more frustrating path: reading widely, going for walks, sitting alone with my weird thoughts at my computer for a long time, and seeing what magic comes out the other side.

My human-sized bet on my future

AI is a tool that can create and destroy. It will upend old industries and spawn brand new ones. The disruption is already here.

I appreciated this video essay from Andreas Hem: it’s a thoughtful critique of AI-generated video compared to real video editing and production.

I’m still evaluating my relationship with it as a tool: which “busy work” tasks I’m willing to let it handle for me, and which ones I’m perfectly content keeping to myself.

But when it comes to creativity and thinking…

I’ve chosen to bet my future on myself and my fellow humans.

I feel an aversion to AI-generated video, AI-generated music, and AI-generated art, because they remove the reason I feel a connection to film and art and music in the first place: a human took time out of their day and worked hard to make it.

Just as a handcrafted piece of furniture can co-exist alongside mass-produced cheap furniture, I hope human-crafted content will retain its value alongside infinite, artificially-created content.

I think there will always be a need for shared experiences, in-person events, handcrafted pieces of art, imperfectly created essays, well-written books, songs that surprise and delight…

We love these things precisely because of their imperfect humanity. Because we are humans who crave connection to other humans.

That’s where I’m planting my flag:

Instead of trying to get better at AI, I’m doubling down on the stuff that makes me human.

I’m going to focus even more on connecting with real people in a real, imperfect way. I’ll go out of my way to make human connections through my art and writing, and hope it reaches other humans who value those things too.

How to Try Again Corner: how my book came to be

Three years ago, I signed a deal to write a book, How to Try Again, which hits bookshelves in June.

After fifteen years of publishing 1,000 research-backed essays for Nerd Fitness (all of which have been absorbed by the robots), I demoted myself twice in my own company and spent the last three years focused on writing this book.

I spent the first six months writing 150,000 words of chaos. I then spent another 2.5 years painstakingly rewriting and reworking each chapter. I had other thoughtful humans provide feedback, challenge my assumptions, and push back on my arguments.

I kept chiseling away at what didn’t work to reveal the final book (I think it was my 21st draft that ended up being the final one).

Like a piece of hand-painted artwork, I’m hoping it carries more weight precisely because I took the human path over 20 drafts to bring it to life.

Once the manuscript was complete, I spent a week in a recording studio reading for the audiobook version – I want people to hear the heart and soul I put into this book. Also, I knew I had to be the one to correctly deliver the bad jokes.

My goal is to have people thinking: “Damn, this Steve dude is one weird cat. Also, I feel like I know him, and it’s clear he really cares about helping people.”

From one weird, lovingly imperfect human to another, I hope these words help you think a bit differently.

And I hope when the robot overlords come for us, they find us “doing sensible and human things…”

-Steve (imperfect human)

PS: If you want to discuss this essay, you can do so in the comments over on Substack!